AI and machine learning are going to change the entire future

This holds whether we are dealing with emergencies, diseases, business development, or cyber security.

It was very difficult for me to understand this in the beginning. When someone told me that machine learning and artificial intelligence would change everything, I used to wonder: how will it replace everything, and will it destroy us humans?

I started to understand these things while taking an applied calculus class in mathematics.

When I followed the Applied Mathematics Calculus Arithmetic class more carefully, I saw how machine learning could change everything.

In 2019, when the coronavirus caused havoc all over the world, data scientists in Germany and other countries collected samples from different areas, studied the data, and reported how many cases were coming from which place. This is what we call data cleaning and probability estimation in machine learning.

A small display of just a few lines can neatly represent several years of collected data. If machine learning is used in the right way, we can protect ourselves from many diseases like the ones we faced in the past. We can also see how far we can go.

Machine learning does not stop there. It has become so advanced that you can even estimate what may happen on which day. In the end, all of these things are found through probability. We cannot say that something must happen on exactly that day; the estimate is drawn from a whole year of data.
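To make the idea of "finding things by probability" concrete, here is a minimal sketch. The dates and the `event` column are invented for illustration and do not come from any real dataset. The point is only that a frequency computed over a year of records gives an estimate, not a certainty.

```python
import pandas as pd

# Hypothetical daily log for one year: 1 = an incident was recorded that day.
# All values here are made up purely for illustration.
days = pd.date_range("2022-01-01", "2022-12-31", freq="D")
log = pd.DataFrame({"date": days, "event": 0})

# Mark a few invented incident days.
incident_days = ["2022-01-10", "2022-01-24", "2022-06-05", "2022-06-06", "2022-06-22"]
log.loc[log["date"].isin(pd.to_datetime(incident_days)), "event"] = 1

# Empirical probability of an incident on a given day, per month:
# the share of days in that month on which an incident was recorded.
monthly_probability = log.groupby(log["date"].dt.month)["event"].mean()

print(monthly_probability.round(3))
```

A month with a higher value had incidents on a larger share of its days in this invented log. That says which months look riskier, not which exact day something will happen.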

Through modeling and data science, we can not only identify diseases, but also determine how often natural disasters occur and in which regions, and so protect ourselves from the trouble they bring.

Data manipulation using Pandas:

Let’s look at a simple dataset that tells us how many natural disasters occurred in Afghanistan in 2022, in which areas, and how large the loss of life was. We will also determine the age of the majority of those who died there.

# Import necessary libraries

import pandas as pd

import matplotlib.pyplot as plt  # needed for the plots below

from sklearn.model_selection import train_test_split

from sklearn.ensemble import RandomForestClassifier

from sklearn.metrics import accuracy_score

Python has libraries such as pandas that make your job easier. These libraries are built on top of the underlying mathematical calculations, so you do not have to worry about doing a lot of math by hand. You mostly just have to plug in your own values.

# Preview the first rows (df is assumed to hold the disaster dataset, already loaded with pandas)

print(df.head())

# Plotting the data

fig, axs = plt.subplots(2, 2, figsize=(12, 10))

# Plot 1: Persons killed and injured

axs[0, 0].bar(df['INC_TYPE'], df['Persons_killed'], color='red', alpha=0.7, label='Persons Killed')

axs[0, 0].bar(df['INC_TYPE'], df['Persons_injured'], color='blue', alpha=0.7, label='Persons Injured')

axs[0, 0].set_title('Persons Killed and Injured')

axs[0, 0].set_xlabel('Incident Type')

axs[0, 0].set_ylabel('Count')

axs[0, 0].legend()

# Plot 2: Families affected

axs[0, 1].bar(df['INC_TYPE'], df['Families_affected'], color='green', alpha=0.7)

axs[0, 1].set_title('Families Affected')

axs[0, 1].set_xlabel('Incident Type')

axs[0, 1].set_ylabel('Count')

# Plot 3: Individuals affected

axs[1, 0].bar(df['INC_TYPE'], df['Individuals_affected2'], color='orange', alpha=0.7)

axs[1, 0].set_title('Individuals Affected')

axs[1, 0].set_xlabel('Incident Type')

axs[1, 0].set_ylabel('Count')

# Plot 4: Houses damaged and destroyed

axs[1, 1].bar(df['INC_TYPE'], df['Houses_damaged'], color='purple', alpha=0.7, label='Houses Damaged')

axs[1, 1].bar(df['INC_TYPE'], df['Houses_destroyed'], color='pink', alpha=0.7, label='Houses Destroyed')

axs[1, 1].set_title('Houses Damaged and Destroyed')

axs[1, 1].set_xlabel('Incident Type')

axs[1, 1].set_ylabel('Count')

axs[1, 1].legend()

plt.tight_layout()

plt.show()
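One thing to keep in mind with the bar charts above: if the dataset has more than one row per incident type, matplotlib draws those bars at the same x position, on top of each other. Summing per incident type first gives one bar per type. The sketch below uses made-up numbers and only two of the column names from the plots; `sample_df` and `totals` are illustrative names, not part of the original script.

```python
import pandas as pd

# Made-up rows using column names from the plotting code above.
sample_df = pd.DataFrame({
    "INC_TYPE": ["Flood", "Earthquake", "Flood", "Landslide"],
    "Persons_killed": [3, 10, 2, 1],
    "Persons_injured": [5, 40, 1, 0],
})

# Sum the numeric columns per incident type so each type gets exactly one row.
totals = sample_df.groupby("INC_TYPE", as_index=False)[
    ["Persons_killed", "Persons_injured"]
].sum()

print(totals)
```

`totals['INC_TYPE']` and `totals['Persons_killed']` can then be passed to `axs[0, 0].bar(...)` in place of the raw columns.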

Implementation of a machine learning model in PenTesting:

At some point I realised that I needed to work on machine learning to build something that would help me in my daily routine.

To be honest, the result was quite amazing.

My honest opinion about machine learning and data science is that it is amazing. Things get quite annoying when you have a large amount of data, for example around a million records. That much data is hard to scan, and we cannot purchase a different script or tool online every time.

A few examples helped me figure this out and helped me “generate big amount of revenue”, so I decided to write about the different machine learning scripts that help me in this work.

I developed a web scraper and automation script for collecting internet data. The script adjusts user agents and IP protocols to address challenges in machine learning and artificial intelligence.

It extracts data from Google, saves it in a CSV file, and tests different user agents imported from a CSV to identify accurate results. This process helped efficiently test 28,000 proxies from PayPal.
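The scraper itself is not shown here, so the following is only a sketch of the user-agent rotation and CSV steps described above, with no network calls. All function names, the `user_agent` column name, and the sample values are hypothetical.

```python
import csv
import io
from itertools import cycle

# Stand-in for a user-agent CSV file; the column name and values are hypothetical.
USER_AGENT_CSV = """user_agent
Mozilla/5.0 (X11; Linux x86_64) ExampleBrowser/1.0
Mozilla/5.0 (Windows NT 10.0; Win64; x64) ExampleBrowser/2.0
"""


def load_user_agents(csv_text):
    """Read the user_agent column from CSV text into a list."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [row["user_agent"] for row in reader if row["user_agent"]]


def build_requests(urls, user_agents):
    """Pair each URL with the next user agent, cycling when the list runs out."""
    agents = cycle(user_agents)
    return [{"url": url, "headers": {"User-Agent": next(agents)}} for url in urls]


def save_results(rows, fieldnames):
    """Write result rows to CSV text (a real script would write to a file)."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


user_agents = load_user_agents(USER_AGENT_CSV)
planned = build_requests(
    ["https://example.com/a", "https://example.com/b", "https://example.com/c"],
    user_agents,
)
report = save_results(
    [{"url": p["url"], "user_agent": p["headers"]["User-Agent"]} for p in planned],
    fieldnames=["url", "user_agent"],
)
print(report)
```

Each planned request carries its own `headers` dictionary, so an HTTP client of your choice could send it as is, and the CSV report records which user agent was used for which URL.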

First of all, I import pandas as pd along with some other libraries; in some cases I can also use numpy. Once everything is set up, we have to drop all the duplicates.

import pandas as pd

df = pd.read_csv(r"paypal.csv")

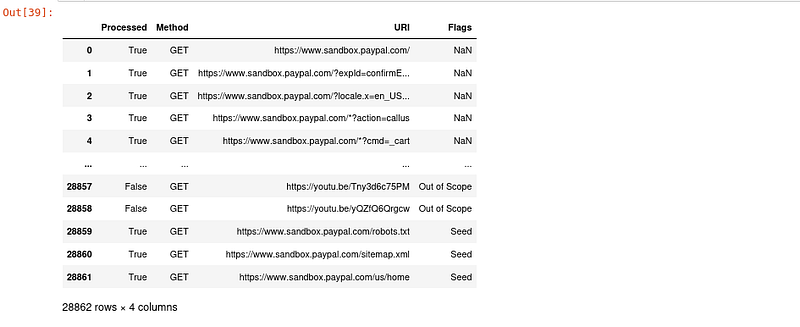

In this case you can see there are around “28862 rows × 4 columns” of URLs, which is quite a large amount to load into a pandas DataFrame.

I can drop whatever rows and columns I do not need. You could do all of this in Google Sheets, a Microsoft tool, or any other tool, but using your own code can save you a great deal of time. That is why I prefer an approach that follows your own logic.

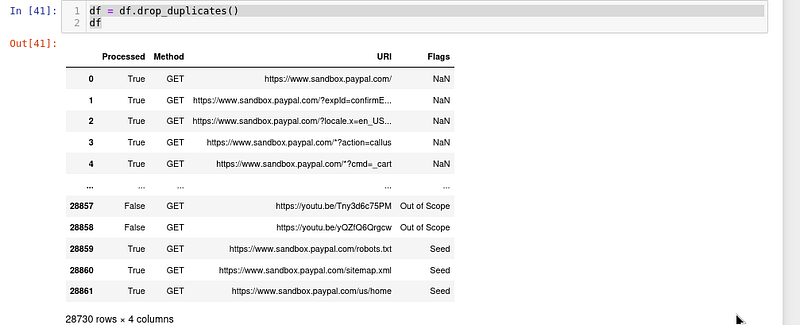

df = df.drop_duplicates()

df

# Drop every row whose 'Flags' value marks it as out of scope

for x in df.index:
    if df.loc[x, 'Flags'] == 'Out of Scope':
        df.drop(x, inplace=True)

df

After dropping a large number of rows, I got cleaner data. It was not as clean as I had hoped, but it was much better. First I removed all the duplicates, then I removed the out-of-scope domains.

20230 rows × 4 columns

df = df.reset_index(drop=True)

df
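The cleaning steps above (drop duplicates, remove out-of-scope rows, reset the index) can also be written as one chain with a boolean filter instead of a row-by-row loop, which tends to be faster on tens of thousands of rows. This is a sketch on made-up data: only the `Flags` column name and the `Out of Scope` value come from the code above, while the `url` column name, the `In Scope` value, and the sample rows are assumptions.

```python
import pandas as pd

# Made-up rows; 'url' and 'In Scope' are hypothetical stand-ins.
raw = pd.DataFrame({
    "url": ["a.example.com", "a.example.com", "b.example.com", "c.example.com"],
    "Flags": ["In Scope", "In Scope", "Out of Scope", "In Scope"],
})

clean = (
    raw.drop_duplicates()                            # remove exact duplicate rows
       .loc[lambda d: d["Flags"] != "Out of Scope"]  # keep only in-scope rows
       .reset_index(drop=True)                       # renumber rows from 0
)

print(clean)
```

The chain leaves `raw` untouched and returns a new DataFrame, so the original data stays available if a filter turns out to be too aggressive.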

Filtering a large amount of subdomain data to find possible subdomain takeovers.

I have already written a couple of articles about finding possible subdomain takeovers; if you haven’t read them yet, you can check them out. In this case I am going to use an existing set of records that I already have. It contains a lot of junk, which I will remove.

To be continued
